db_session(allowed_exceptions=[], immediate=False, optimistic=True, retry=0, retry_exceptions=[TransactionError], serializable=False, strict=False, sql_debug=None, show_values=None)

Used for establishing a database session.

allowed_exceptions (list) – a list of exceptions which, when they occur, do not cause the transaction to roll back. Can be useful with some web frameworks which trigger an HTTP redirect with the help of an exception.

immediate (bool) – tells Pony when to start a transaction with the database. Some databases (e.g. SQLite, Postgres) start a transaction only when a modifying query is sent to the database (UPDATE, INSERT, DELETE) and don't start it for SELECTs. If you need to start a transaction on SELECT, then you should set immediate=True. Usually there is no need to change this parameter.

optimistic (bool) – when optimistic=False, no optimistic checks will be added to queries within this db_session (new in version 0.7.3).

retry (int) – specifies the number of attempts for committing the current transaction. This parameter can be used with the decorator only. The decorated function should not call the commit() or rollback() functions explicitly. When this parameter is specified, Pony catches the TransactionError exception (and all its descendants) and restarts the current transaction. By default Pony catches the TransactionError exception only, but this list can be modified using the retry_exceptions parameter.

retry_exceptions (list|callable) – a list of exceptions which will cause the transaction to restart. Another option is using a callable which returns a boolean value.

Each BLOB stored in the chunk column is at most 2 MiB (1024 * 1024 * 2 bytes). I see the sqlite.db-wal file growing to the full size of the migration (40GB), but again, that doesn't actually spell out a problem as far as I can tell. Could someone explain to me what exactly is the bottleneck here? I don't think table scanning is necessary.
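The retry and retry_exceptions behaviour described for db_session can be illustrated with a small standard-library sketch. This is not Pony's implementation: the `with_retry` decorator and the stand-in `TransactionError` class are hypothetical names used only to show how "a list of exceptions or a callable returning a boolean" might decide whether a function is re-run.

```python
import functools


class TransactionError(Exception):
    """Hypothetical stand-in for an ORM transaction error (not Pony's class)."""


def with_retry(retry=0, retry_exceptions=(TransactionError,)):
    """Re-run the decorated function up to `retry` extra times.

    `retry_exceptions` is either a list/tuple of exception classes or a
    callable that takes the exception and returns True to retry.
    """

    def should_retry(exc):
        if callable(retry_exceptions):
            return bool(retry_exceptions(exc))
        return isinstance(exc, tuple(retry_exceptions))

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            attempts = retry + 1
            for attempt in range(attempts):
                try:
                    return func(*args, **kwargs)
                except Exception as exc:
                    # Give up on the last attempt or on a non-retryable error.
                    if attempt == attempts - 1 or not should_retry(exc):
                        raise

        return wrapper

    return decorator


calls = []


@with_retry(retry=2)
def flaky():
    # Fails twice with a retryable error, then succeeds.
    calls.append(1)
    if len(calls) < 3:
        raise TransactionError("conflict")
    return "committed"


result = flaky()
```

In this sketch the function body plays the role of the whole transaction, which is why the text above says the decorated function should not commit or roll back on its own: a retry only makes sense if each attempt starts from a clean state.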
Some additional context is that the table being migrated (media_chunk) is used to store chunks of files (the chunk column). The migration also selects the old data needed to set the additional columns and then runs an insert into table_new select from table_old. There are no indexes on any of the tables, though there is a non-null foreign key pointing at another table. The full transaction is below:

import Sqlite3 from 'better-sqlite3'
const TIMESTAMP_SQLITE = `TIMESTAMP DATETIME DEFAULT(STRFTIME('%Y-%m-%dT%H:%M:%fZ', 'NOW'))`
const media chunks)`)
ALTER TABLE media_chunk_new RENAME TO media_chunk
CREATE INDEX media_chunk_range ON media_chunk (media_file_id, bytes_start)
console.log() // just flush the last line of output
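Since most of the original script did not survive, here is a minimal sketch of the same migration shape (new table, copy with computed columns, rename, index) using Python's built-in sqlite3 module rather than better-sqlite3. The two added columns are assumed to be `bytes_start` and `bytes_end`, the `DROP TABLE` step is also an assumption, and the foreign key is left out to keep the example self-contained. None of these details is confirmed by the question.

```python
import sqlite3


def migrate(conn):
    """Rebuild media_chunk with two extra (assumed) columns in one transaction."""
    cur = conn.cursor()
    cur.execute("BEGIN")
    try:
        cur.execute(
            """CREATE TABLE media_chunk_new (
                   id INTEGER PRIMARY KEY,
                   media_file_id INTEGER NOT NULL,
                   chunk BLOB NOT NULL,
                   bytes_start INTEGER NOT NULL,
                   bytes_end INTEGER NOT NULL)"""
        )
        # Read only ids and sizes, never the BLOBs themselves.
        sizes = conn.execute(
            "SELECT id, media_file_id, length(chunk) FROM media_chunk "
            "ORDER BY media_file_id, id"
        ).fetchall()
        offsets = {}  # running byte offset per media file
        for chunk_id, file_id, size in sizes:
            start = offsets.get(file_id, 0)
            # Copy the row inside SQLite so the BLOB is not moved through Python.
            cur.execute(
                "INSERT INTO media_chunk_new "
                "(id, media_file_id, chunk, bytes_start, bytes_end) "
                "SELECT id, media_file_id, chunk, ?, ? FROM media_chunk WHERE id = ?",
                (start, start + size, chunk_id),
            )
            offsets[file_id] = start + size
        cur.execute("DROP TABLE media_chunk")  # assumed step
        cur.execute("ALTER TABLE media_chunk_new RENAME TO media_chunk")
        cur.execute(
            "CREATE INDEX media_chunk_range ON media_chunk (media_file_id, bytes_start)"
        )
        cur.execute("COMMIT")
    except Exception:
        cur.execute("ROLLBACK")
        raise


# isolation_level=None leaves BEGIN/COMMIT under explicit control.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute(
    "CREATE TABLE media_chunk ("
    "id INTEGER PRIMARY KEY, media_file_id INTEGER NOT NULL, chunk BLOB NOT NULL)"
)
conn.executemany(
    "INSERT INTO media_chunk (media_file_id, chunk) VALUES (?, ?)",
    [(1, b"a" * 10), (1, b"b" * 5), (2, b"c" * 7)],
)
migrate(conn)
migrated = conn.execute(
    "SELECT media_file_id, bytes_start, bytes_end FROM media_chunk ORDER BY id"
).fetchall()
```

Creating the index after the copy, as the original script appears to do, avoids maintaining it on every insert.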

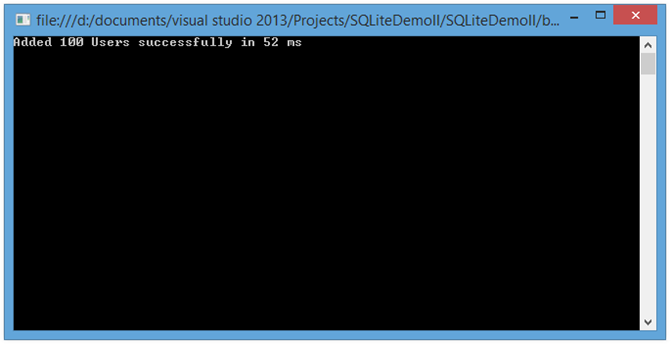

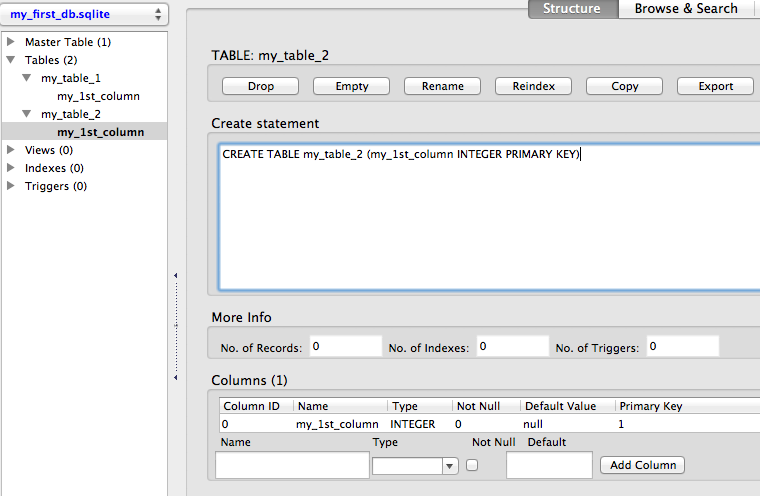

So, in the process of writing a migration wrapped in a transaction for a local web app, I realized that the migration performs inserts very fast when the transaction has a small number of inserts (on the order of 100 milliseconds per insert), but performs much slower as the transaction gets larger (upwards of 4 seconds per insert). I am working with a fairly massive amount of data for a single transaction (~40GB, 20,000 rows), but I don't understand what is actually slowing down the inserts or selects. The migration is essentially adding two columns to a table. It starts by creating a new table with the two additional columns.
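One way to check whether transaction size is really the factor is to time each insert while committing every N rows, then compare runs with different batch sizes. The sketch below uses Python's built-in sqlite3 module; the helper name `insert_in_batches` and the table layout are hypothetical. It is an experiment to gather numbers, not a diagnosis of the slowdown.

```python
import sqlite3
import time


def insert_in_batches(conn, rows, batch_size):
    """Insert rows, committing every `batch_size` rows.

    Returns (number of non-empty commits, list of per-insert durations).
    """
    timings = []
    commits = 0
    pending = 0  # rows inserted since the last commit
    conn.execute("BEGIN")
    for file_id, chunk in rows:
        t0 = time.perf_counter()
        conn.execute(
            "INSERT INTO media_chunk_new (media_file_id, chunk) VALUES (?, ?)",
            (file_id, chunk),
        )
        timings.append(time.perf_counter() - t0)
        pending += 1
        if pending == batch_size:
            conn.execute("COMMIT")
            commits += 1
            pending = 0
            conn.execute("BEGIN")
    conn.execute("COMMIT")  # close the last, possibly empty, transaction
    if pending:
        commits += 1
    return commits, timings


# isolation_level=None leaves BEGIN/COMMIT under explicit control.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute(
    "CREATE TABLE media_chunk_new ("
    "id INTEGER PRIMARY KEY, media_file_id INTEGER NOT NULL, chunk BLOB NOT NULL)"
)
rows = [(1, b"x" * 1024) for _ in range(10)]
commits, timings = insert_in_batches(conn, rows, batch_size=4)
```

Setting `batch_size` to the full row count reproduces the single-transaction case. Note that committing in batches gives up the all-or-nothing guarantee of one large transaction, so a real migration would need a way to resume or clean up after a partial run.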
